Introduction

Today we're launching Catalyst, a platform for building continuously self-improving AI models.

Catalyst is a full-stack platform that combines monitoring, evaluations, training, and deployment into a single product. It's built specifically for teams that want to optimize production AI applications. It is not a general-purpose AI research tool.

The core idea is simple: instead of constructing synthetic environments that try to approximate reality, Catalyst samples production traffic from your application and uses it to train models via supervised fine-tuning. Your production data is your training environment.

Today, Catalyst can train Specialized Language Models that match or exceed frontier-model quality at up to 95% lower cost.

How Catalyst Works

Catalyst installs into your repository like an ordinary LLM tracing tool. It works with existing OpenAI- and Anthropic-compatible providers: just swap your base URL and add a few request headers. The Catalyst Gateway sits between your application and your model provider, recording, analyzing, and storing production traffic.

Add Catalyst to your project with a single command. From your project root:

```bash
npm install -g @inference/cli && inf instrument
```

This command uses an AI coding agent to analyze your codebase and make the changes necessary to route requests through Catalyst with the correct metadata. From there, you run your application normally. Every LLM call is captured and appears in the Catalyst dashboard.

Routed through the gateway, an OpenAI client looks like this:

```python
import os

from openai import OpenAI

client = OpenAI(
    # Point the client at the Catalyst Gateway instead of the provider.
    base_url="https://api.inference.net/v1",
    api_key=os.environ["INFERENCE_API_KEY"],
    # Upstream provider and its API key, passed as request headers.
    default_headers={
        "x-inference-provider-api-key": os.environ["OPENAI_API_KEY"],
        "x-inference-provider": "openai",
    },
)

response = client.chat.completions.create(
    model="gpt-4.1",
    messages=[{"role": "user", "content": "Hello, world!"}],
)

print(response.choices[0].message.content)
```

For more information on getting started, refer to our documentation.

Catalyst Workflow

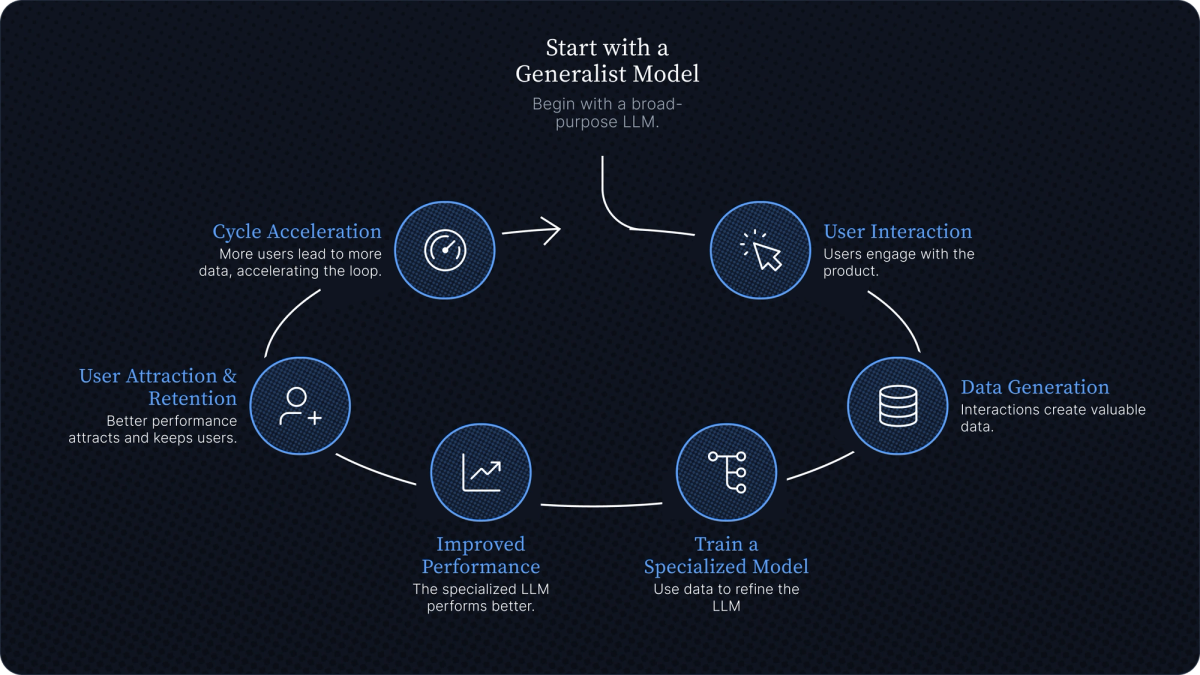

Once traffic is flowing, the workflow is quite simple:

Build datasets from traffic. Catalyst turns your recorded LLM calls into training and evaluation datasets. Real traffic means your training data matches the actual distribution of scenarios your model will encounter.

Evaluate with LLM-as-judge. You can't improve what you can't measure. Catalyst helps you build evaluation suites starting from the data you already have. You establish a performance baseline for your current model, then measure every subsequent training run against it.

Train. Catalyst Recipes offer preconfigured training rules with a base model, optimized parameters, and compute resources. The platform handles base model selection, hyperparameters, and training infrastructure. Evaluations run automatically before, during, and after training to measure quality.

Deploy. Trained models deploy to dedicated GPU infrastructure. Switching your application to a custom model is a one-line code change. You fully own the model weights: deploy on our infrastructure, or host them in your own private VPS.
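To make the first step above more concrete, here is a minimal sketch of turning recorded calls into training and evaluation sets. It is not Catalyst's implementation. The record shape (a `messages` list plus a `completion` string), the function names, and the 10% evaluation fraction are all assumptions made for illustration.

```python
import hashlib
import json
from typing import Iterable


def to_example(record: dict) -> dict:
    """Turn one recorded call (hypothetical schema) into a chat-format example."""
    return {
        "messages": record["messages"]
        + [{"role": "assistant", "content": record["completion"]}]
    }


def bucket(example: dict) -> float:
    """Map an example to a stable value in [0, 1) from a hash of its prompt."""
    prompt = json.dumps(example["messages"][:-1], sort_keys=True)
    digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    return int(digest[:8], 16) / 16**8


def build_datasets(records: Iterable[dict], eval_fraction: float = 0.1):
    """Deduplicate recorded calls and split them into train and eval sets."""
    seen, train, evals = set(), [], []
    for record in records:
        example = to_example(record)
        key = json.dumps(example, sort_keys=True)
        if key in seen:  # drop exact duplicates
            continue
        seen.add(key)
        # Hashing the prompt keeps identical prompts on the same side of the split.
        (evals if bucket(example) < eval_fraction else train).append(example)
    return train, evals
```

Splitting on a hash of the prompt, rather than at random, keeps the split reproducible across runs and prevents the same prompt from landing in both sets.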

From here, the cycle continues, with Catalyst managing the training loop. It ingests fresh production data, generates training and evaluation datasets, runs training jobs, and compares resulting models against the production baseline. When new models are ready, they’re staged for final review and deployment.
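The comparison against the production baseline can be sketched as a simple gate. This is an illustrative outline under stated assumptions, not Catalyst's actual logic: `Judge`, `Model`, `evaluate`, `should_stage`, and the `min_gain` threshold are hypothetical names, and in practice the judge would wrap a call to an LLM rather than a local function.

```python
from statistics import mean
from typing import Callable

# A judge takes (prompt, answer) and returns a score in [0, 1].
# In a real setup this would call an LLM; here it is any callable.
Judge = Callable[[str, str], float]
# A model takes a prompt and returns an answer.
Model = Callable[[str], str]


def evaluate(model: Model, prompts: list[str], judge: Judge) -> float:
    """Mean judge score for a model over an evaluation set."""
    return mean(judge(prompt, model(prompt)) for prompt in prompts)


def should_stage(
    baseline: Model,
    candidate: Model,
    prompts: list[str],
    judge: Judge,
    min_gain: float = 0.0,
) -> bool:
    """Stage the candidate for review only if it scores at least
    `min_gain` above the production baseline on the same prompts."""
    baseline_score = evaluate(baseline, prompts, judge)
    candidate_score = evaluate(candidate, prompts, judge)
    return candidate_score >= baseline_score + min_gain
```

Scoring both models on the same prompts with the same judge is what makes the two numbers comparable. The final decision still goes to a human reviewer, as described above.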

Catalyst in Production

The cost of a Catalyst training loop depends on the size of the base model and the amount of training data required. Training runs range from $25 up to several thousand dollars. Catalyst is already powering custom models in production:

- Self-improving coding models for proprietary programming languages, used by hundreds of engineers at a public financial institution

- Data extraction models that match Opus 4.6 performance with 150ms p50 end-to-end latency at 5% of the cost

- Calorie estimation models that outperform frontier models on quality, latency, and cost

- HTML-to-JSON extraction models like Schematron-8b, used by some of the largest web scraping companies in the world

- Deep research agents that surpass the current Pareto frontier on cost-to-accuracy

We’ll be sharing more stories about our customers and the models they have trained using Catalyst over the coming weeks.
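For a rough sense of how the training costs quoted above relate to inference savings, a back-of-the-envelope break-even estimate can be sketched as follows. The function name and the per-request cost are hypothetical, and the 95% reduction is the best-case figure from this post, so actual results will vary.

```python
def break_even_requests(
    training_cost: float,
    baseline_cost_per_request: float,
    cost_reduction: float = 0.95,
) -> float:
    """Number of requests after which inference savings cover one training run.

    cost_reduction is the fraction saved per request (0.95 means 95% cheaper).
    """
    if not 0 < cost_reduction <= 1:
        raise ValueError("cost_reduction must be in (0, 1]")
    if baseline_cost_per_request <= 0:
        raise ValueError("baseline_cost_per_request must be positive")
    savings_per_request = baseline_cost_per_request * cost_reduction
    return training_cost / savings_per_request


# Example: a $25 training run against a hypothetical $0.01 per request baseline.
requests_needed = break_even_requests(25.0, 0.01)
```

With these illustrative inputs, each request saves $0.0095, so the run pays for itself after roughly 2,600 requests.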

Catalyst vs. RL

The dominant post-training story today starts in the wrong place. First you spend weeks building an environment that tries to simulate the real work your model will face after deployment. Then you spend more time building rubrics, hardening evals, and trying to stop the model from learning the wrong lesson. After training, you discover the model has found a way to optimize for the score instead of the job, and the process begins again.

This is expensive, and slow, and brittle in exactly the way engineers hate: you can do everything right inside the sandbox and still learn almost nothing about how the system will behave once it hits production. AI systems cannot be optimized in a vacuum. If the goal is to improve a production system, the source of truth should be production itself.

We built Catalyst because we think the current paradigms promoted by RL are unnecessarily difficult, low yield, and out of reach for most teams. If you're running an AI application in production, you're already generating the data you need to train a frontier-quality model for your system. The tools required to capture this data, evaluate it, and train on it, without building an RL environment, have simply not existed in a single place until today.

Available Today

Catalyst is available in public beta today. We are covering training and deployment costs during the beta, so if you are already running an app or agent in production, you can try the full observe-train-deploy loop for free.

We have a lot in store for Catalyst. Over time, Catalyst will take on more of the improvement loop, shortening the distance from live traffic to a better model. If your AI system is already in production, you already have enough to start.

Sign up for an account at inference.net or check out the quick start guide for detailed instructions on how to get started.

Meet with our research team

Schedule a call with our research team to learn more about how Specialized Language Models can cut costs and improve performance.