Introduction

Today we're releasing Schematron V2, the next generation of our specialized HTML-to-JSON extraction models. If you're new here: Schematron is a family of small, purpose-built models that transform messy HTML into clean, structured JSON, delivering frontier-level extraction quality at a fraction of the cost and latency of large general-purpose LLMs.

Schematron V1 already powers massive extraction workloads for companies operating on web-scale data in production today, and we’re excited to release new variants that push performance even further.

Schematron V2 introduces two new models: Schematron V2 Small and Schematron V2 Turbo. Schematron V2 Small nearly matches the quality of our first-generation 8B model while keeping 3B-like speed. Schematron V2 Turbo is optimized for maximum throughput, processing 4.14 requests per second on a single H100 (a 2.5x improvement over the original Schematron 8B and nearly 1.7x over the original 3B) while still exceeding the quality of the previous-generation 3B model.

We trained Schematron using Catalyst, our LLM fine-tuning engine built specifically for training high-performance task-specific LLMs. Both Schematron V2 models are available today through our serverless API on Inference.net.

Create a free account or check out our documentation to get started.

Schematron API

Call Schematron V2 through the Inference.net serverless API using the code below. Set the INFERENCE_API_KEY environment variable and assign your HTML document to the HTML variable.

```python
import os

from openai import OpenAI
from pydantic import BaseModel, Field


class Product(BaseModel):
    name: str
    price: float = Field(..., description="Primary price of the product.")
    specs: dict = Field(
        default_factory=dict,
        description="Specs of the product.",
    )
    tags: list[str] = Field(
        default_factory=list,
        description="Tags assigned to the product.",
    )


# Replace with the raw HTML document you want to extract from.
HTML = '<YOUR_HTML_DOC>'

client = OpenAI(
    base_url="https://api.inference.net/v1",
    api_key=os.environ.get("INFERENCE_API_KEY"),
)

# The Pydantic model is passed as the response format; the parsed result
# comes back as a Product instance.
resp = client.beta.chat.completions.parse(
    model="inference-net/schematron-v2-small",
    messages=[
        {"role": "user", "content": HTML},
    ],
    response_format=Product,
)

print(resp.choices[0].message.parsed.model_dump_json(indent=2))
```

Why This Matters

When we launched Schematron V1 in September 2025, we showed that small, specialized models could match frontier LLMs at HTML extraction while being 40-80x cheaper. The response from the community validated what we already knew: teams everywhere are trying to extract structured data from the web at scale, and cost has been the bottleneck.

With V2, we pushed the quality-to-size ratio even further. You can get 8B-like quality at the speed of a much smaller model. And if throughput is your priority, the Turbo variant lets you process pages faster than ever before.

Quality: Closing the Gap with 8B

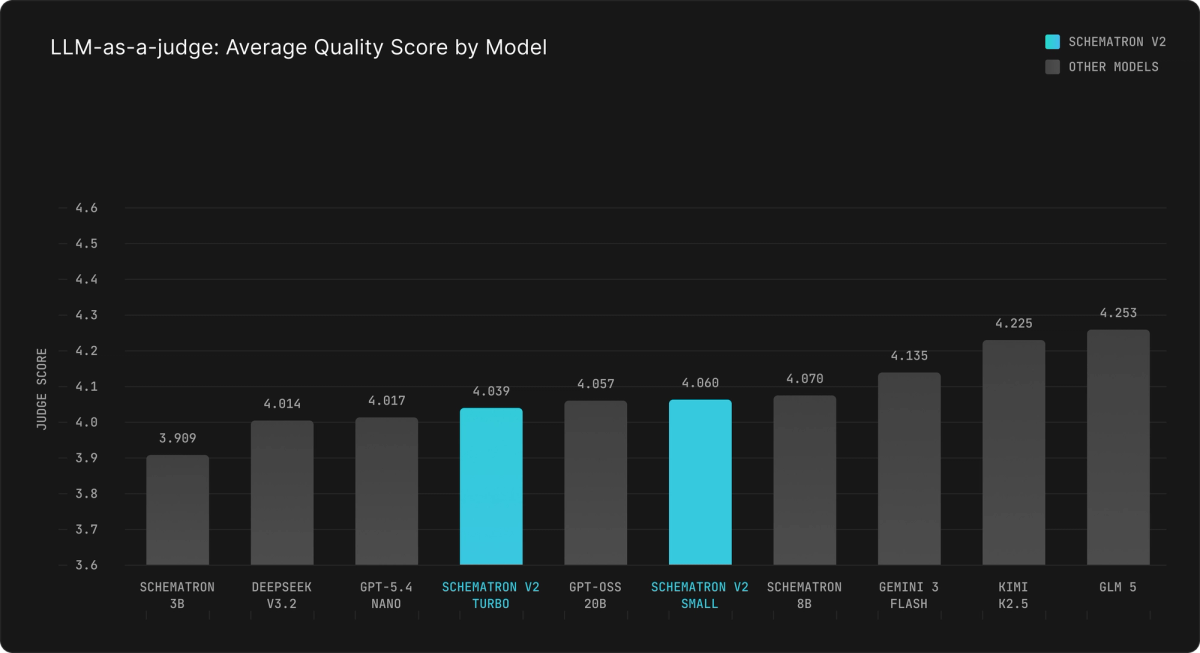

We evaluated Schematron V2 using the same LLM-as-a-judge methodology from our original launch, with GPT-5.4 grading extractions on a 1-5 scale.

Schematron V2 Small scores 4.060, putting it within striking distance of the original Schematron 8B (4.070) and meaningfully ahead of the first-generation Schematron 3B (3.909). For most production workloads, the difference between V2 Small and 8B is negligible.

Schematron V2 Turbo scores 4.039, still a significant jump from the original 3B (3.909), even though it is optimized for speed rather than quality.

To put this in perspective: both V2 models outperform DeepSeek V3.2 and GPT-5.4 Nano on this benchmark, even though those are substantially larger models.
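One way to read "closing the gap" is to ask how much of the score difference between the V1 3B and V1 8B models each V2 model recovers. The short sketch below does that arithmetic using only the judge scores quoted in this section; the `gap_closed` helper is an illustrative name, not part of any Schematron tooling.

```python
# Judge scores (1-5 scale) quoted in this section.
SCORES = {
    "Schematron 3B (V1)": 3.909,
    "Schematron 8B (V1)": 4.070,
    "Schematron V2 Small": 4.060,
    "Schematron V2 Turbo": 4.039,
}


def gap_closed(model: str) -> float:
    """Fraction of the V1 3B-to-8B score gap that `model` recovers."""
    low = SCORES["Schematron 3B (V1)"]
    high = SCORES["Schematron 8B (V1)"]
    return (SCORES[model] - low) / (high - low)


for name in ("Schematron V2 Small", "Schematron V2 Turbo"):
    print(f"{name}: {gap_closed(name):.0%} of the 3B-to-8B gap")
# Schematron V2 Small: 94% of the 3B-to-8B gap
# Schematron V2 Turbo: 81% of the 3B-to-8B gap
```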

Throughput: Up to 2.5x Faster Than V1

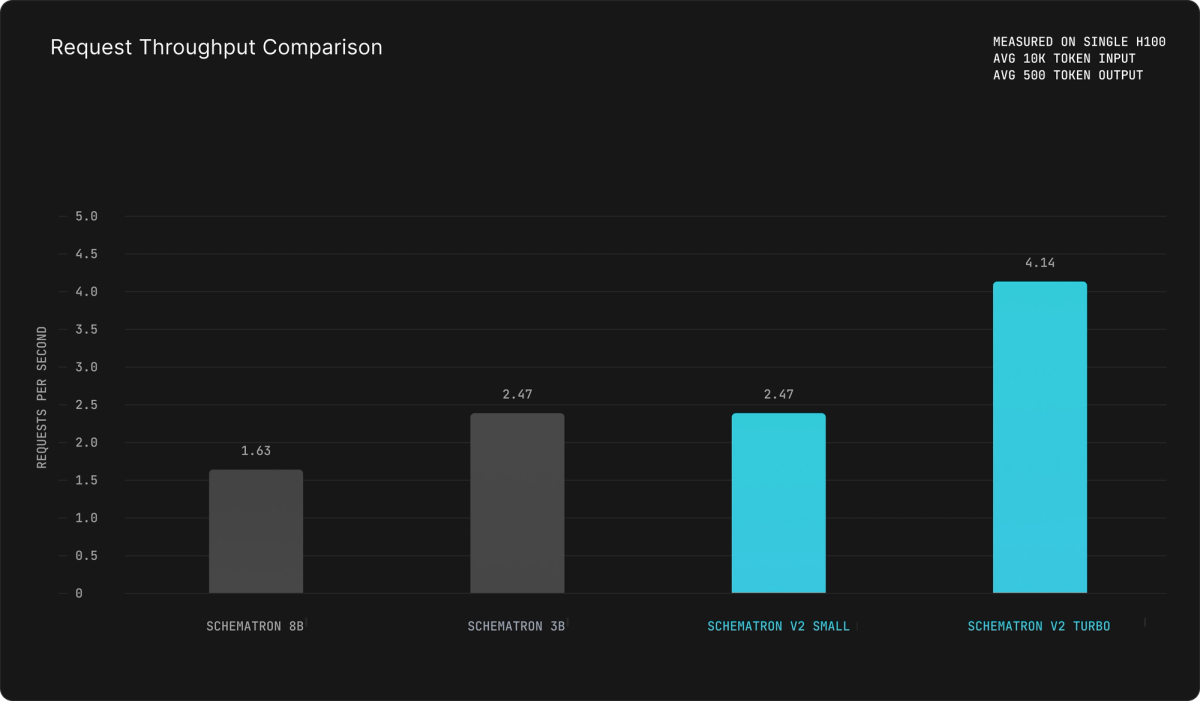

Schematron V2 Turbo is built for high-volume workloads where every millisecond and every dollar counts.

On a single H100 with 10k input tokens and 500 output tokens:

Schematron V2 Small: 2.47 requests/sec

Schematron V2 Turbo: 4.14 requests/sec

Schematron 8B (V1): 1.63 requests/sec

Schematron 3B (V1): 2.47 requests/sec

The Turbo model achieves 4.14 requests per second, a 2.5x improvement over the original 8B and nearly 1.7x over the previous 3B. For crawling and scraping pipelines that are latency-sensitive (think financial document parsing for live trading, or real-time price monitoring), this speed advantage compounds quickly.
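To make the throughput figures concrete, the sketch below derives the speedup ratios and a rough pages-per-day figure per GPU from the numbers above. It assumes one request per page, the benchmark's request shape (10k input, 500 output tokens), and sustained throughput at the benchmark rate, which real traffic may not match. The helper names are illustrative.

```python
import math

SECONDS_PER_DAY = 24 * 60 * 60

# Requests/sec on a single H100, taken from the list above.
THROUGHPUT = {
    "Schematron V2 Small": 2.47,
    "Schematron V2 Turbo": 4.14,
    "Schematron 8B (V1)": 1.63,
    "Schematron 3B (V1)": 2.47,
}


def speedup(model: str, baseline: str) -> float:
    """Ratio of requests/sec between two models."""
    return THROUGHPUT[model] / THROUGHPUT[baseline]


def pages_per_day(model: str) -> float:
    """Pages one H100 could process in 24 hours at the benchmark rate."""
    return THROUGHPUT[model] * SECONDS_PER_DAY


def gpus_needed(model: str, daily_pages: int) -> int:
    """Whole H100s required for a daily page volume (no headroom added)."""
    return math.ceil(daily_pages / pages_per_day(model))


turbo = "Schematron V2 Turbo"
print(f"vs 8B (V1): {speedup(turbo, 'Schematron 8B (V1)'):.2f}x")  # 2.54x
print(f"vs 3B (V1): {speedup(turbo, 'Schematron 3B (V1)'):.2f}x")  # 1.68x
print(f"pages/day per H100: {round(pages_per_day(turbo)):,}")      # 357,696
print(f"H100s for 1M pages/day: {gpus_needed(turbo, 1_000_000)}")  # 3
```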

Improving LLM Factuality: SimpleQA Results

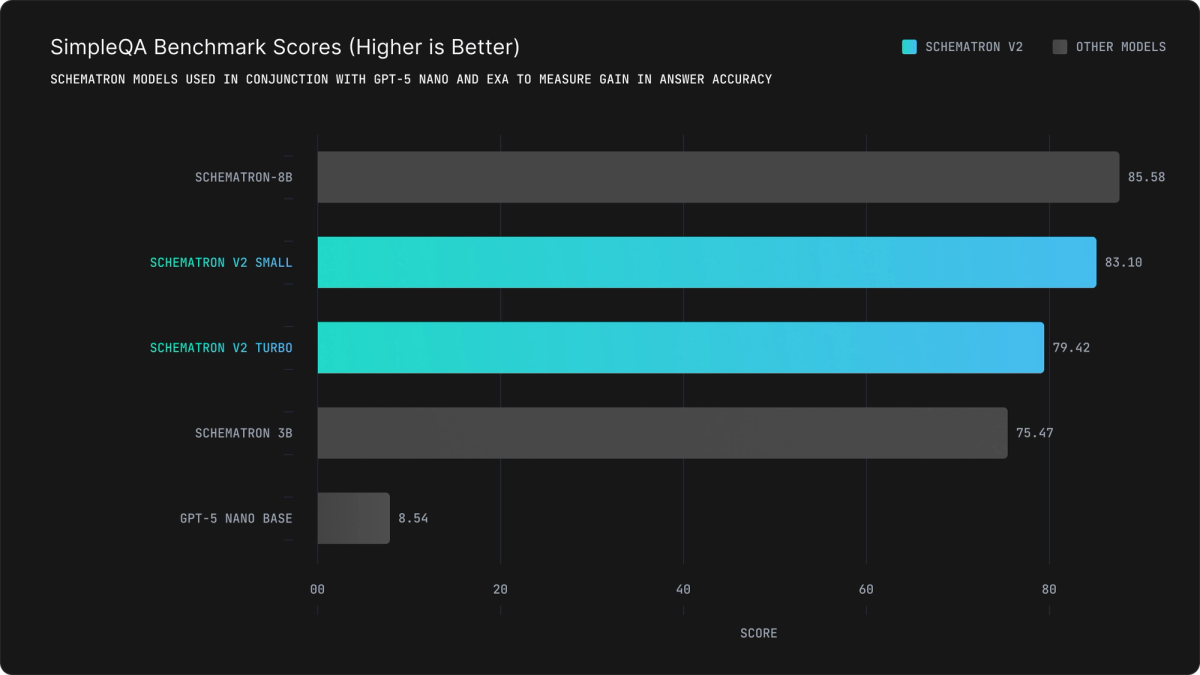

We also re-ran our SimpleQA benchmark, which measures how well Schematron improves factual accuracy when used as an extraction layer in a web-search-augmented pipeline (using GPT-5 Nano as the base model with Exa search).

Schematron V2 Turbo: 79.42

Schematron V2 Small: 83.10

Schematron 8B (V1): 85.58

Schematron 3B (V1): 75.47

GPT-5 Nano (no search): 8.54

Schematron V2 Small reaches 83.10, a massive 7.6-point improvement over the first-generation 3B and only 2.5 points behind the 8B model. Even the Turbo variant hits 79.42, nearly a 4-point gain over the original 3B while processing pages significantly faster.
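The point differences quoted above can be reproduced directly from the SimpleQA scores. This is only a small arithmetic check; the `delta` helper is an illustrative name.

```python
# SimpleQA scores from the list above.
SIMPLEQA = {
    "Schematron V2 Turbo": 79.42,
    "Schematron V2 Small": 83.10,
    "Schematron 8B (V1)": 85.58,
    "Schematron 3B (V1)": 75.47,
    "GPT-5 Nano (no search)": 8.54,
}


def delta(model: str, baseline: str) -> float:
    """Score difference in points, rounded to two decimals."""
    return round(SIMPLEQA[model] - SIMPLEQA[baseline], 2)


print(delta("Schematron V2 Small", "Schematron 3B (V1)"))  # 7.63
print(delta("Schematron V2 Small", "Schematron 8B (V1)"))  # -2.48
print(delta("Schematron V2 Turbo", "Schematron 3B (V1)"))  # 3.95
```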

These results reinforce our core finding: structured extraction fundamentally changes the economics of web search for LLMs. Instead of passing entire raw HTML pages into a large model (tens of thousands of tokens per query), Schematron extracts only the structured data that matters, making web-augmented factuality viable at scale.

Pricing

Schematron V2 is priced to make internet-scale extraction accessible:

Schematron V2 Small: $0.10/1M input tokens – $0.25/1M output tokens

Schematron V2 Turbo: $0.06/1M input tokens – $0.15/1M output tokens

At these prices, processing 1 million pages per day (assuming 10k input + 8k output tokens per page) costs roughly $3,000/day with V2 Small or $1,800/day with V2 Turbo. For high-volume workloads, additional discounts are available through dedicated instances. Meet with us to learn more about dedicated capacity.
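Daily cost scales linearly with page volume and with the input and output token counts per page, so it is worth estimating with your own numbers. The sketch below is a minimal estimator that uses the per-token list prices above; the `Price` class, the `daily_cost` function, and the dictionary keys are illustrative names, and dedicated-instance discounts are not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


# List prices from this section (keys are display labels, not API model IDs).
PRICES = {
    "V2 Small": Price(input_per_million=0.10, output_per_million=0.25),
    "V2 Turbo": Price(input_per_million=0.06, output_per_million=0.15),
}


def daily_cost(model: str, pages: int, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD per day for `pages` pages with the given tokens per page."""
    price = PRICES[model]
    per_page_micro_usd = (
        input_tokens * price.input_per_million
        + output_tokens * price.output_per_million
    )
    # Prices are quoted per 1M tokens, so divide once at the end.
    return pages * per_page_micro_usd / 1_000_000


for model in PRICES:
    cost = daily_cost(model, pages=1_000_000, input_tokens=10_000, output_tokens=8_000)
    print(f"{model}: ${cost:,.0f}/day")
```

Swapping in your own average page size and schema size usually matters more than the choice of model, since output tokens are priced at 2.5x input tokens for both variants.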

What's Next: Schematron Pro

We're not stopping here. Schematron Pro is in development, targeting even higher accuracy than the current 8B model while maintaining similar request throughput. For teams that need the absolute best extraction quality and are willing to pay a premium over the 3B models, Pro will be the answer. Stay tuned.

Getting Started

Both V2 models share the same core capabilities as the original Schematron family: a 128K token context window and strict JSON mode guaranteeing 100% schema-compliant output. Define your schema with Pydantic, Zod, or JSON Schema, pass in raw HTML, and get back validated, type-safe data.
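For teams not using Pydantic, the same `Product` shape from the API example can be written as plain JSON Schema. The sketch below builds a request body with the standard library only. It assumes the endpoint accepts OpenAI-style `json_schema` response formats, which the text above implies but does not spell out; `build_request` is an illustrative helper, so check the documentation for the exact request shape.

```python
import json

# The Product schema from the API example, written as plain JSON Schema.
PRODUCT_SCHEMA = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "price": {"type": "number", "description": "Primary price of the product."},
        "specs": {"type": "object", "description": "Specs of the product."},
        "tags": {
            "type": "array",
            "items": {"type": "string"},
            "description": "Tags assigned to the product.",
        },
    },
    "required": ["name", "price"],
}


def build_request(html: str, model: str = "inference-net/schematron-v2-small") -> dict:
    """Build a chat-completions request body that asks for schema-constrained JSON."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": html}],
        # Assumption: OpenAI-style structured-output format is accepted here.
        "response_format": {
            "type": "json_schema",
            "json_schema": {"name": "product", "schema": PRODUCT_SCHEMA},
        },
    }


body = build_request("<html><body><h1>Widget</h1><span>$9.99</span></body></html>")
print(json.dumps(body["response_format"], indent=2))
```

Under the same assumption, the body could be sent with the OpenAI client from the earlier example via `client.chat.completions.create(**body)`, and the returned message content parsed with `json.loads`.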

For more information on usage, please refer to our documentation.

The Schematron V2 Small and V2 Turbo model weights will remain closed-source for now, available exclusively through our serverless API. The original open-source Schematron 3B and 8B models remain available on Hugging Face.

Start Extracting

We'd love to hear what you're building. Get started with these resources:

Create Account: Try the Inference.net API with $10 free credits

Documentation: Read the Schematron docs to learn how to call the API

Schedule a meeting: Meet with our team to learn about dedicated instances and volume pricing

We can't wait to see what you build with even faster, even cheaper extraction.
