
How Gravity Ads Trains Specialized LLMs to Power Their AI-Native Ad Network

Gravity Ads serves native, intent-aware sponsored suggestions inside AI chatbots and assistants, turning conversational traffic into a new advertising surface.


Company Overview

Gravity is an AI-native ad network that connects advertisers with users by placing targeted product suggestions into LLM-powered applications. When a user shows intent to purchase, or is asking about a problem where a specific product might be the solution, Gravity matches that against its advertiser inventory and surfaces a product suggestion inline in real time.

For every user request, Gravity has to semantically analyze what the user is asking for, determine whether it is relevant to the current advertiser inventory, and serve a high-quality ad integrated into the AI response in a way that feels natural.

Results at a Glance

Using the Catalyst platform from Inference.net, Gravity replaced its general-purpose 70B model on Cerebras with a specialized 1B model trained specifically for its production workload.

The new model matched or exceeded the previous model on evals, reduced p99 latency from 1,992 ms to 351 ms, and cut inference costs by roughly 10x. The full migration, from data capture to training, evaluation, deployment, and launch, took less than a week.

Challenges

Every Gravity ad placement is governed by three competing constraints: cost, latency, and response quality. Improving one typically comes at the expense of the others. The challenge was finding an inference setup that could support millions of requests per day at low cost and latency without sacrificing the quality needed to serve responses that feel native to the conversation and stay relevant to user intent.

As an initial solution, Gravity hosted a general-purpose 70B-parameter model on Cerebras to take advantage of its custom hardware for fast inference. This setup worked, but several core issues remained.

- Latency remained high. Gravity's p99 latency was still close to 2 seconds, which created a risk that relevant ads would take too long to render to the end user.

- Gravity wanted a more hands-on technical partner. The team wanted help identifying the right optimization path, evaluating model quality, and moving the optimized setup into production.

- Inference costs were significant at production scale. With millions of requests per day, even a general-purpose open-source model created meaningful ongoing infrastructure cost.

Solution

Gravity approached Inference.net for a way to lower latency and cost without sacrificing quality. Inference.net recommended using Catalyst to train and deploy a smaller fine-tuned model built specifically for Gravity's task. For highly specific tasks like this, a smaller fine-tuned model is cheaper and faster and, with a good training framework, can often match or outperform much larger general-purpose models on the task it was trained for. Catalyst was built to support this workflow end to end: capture production traffic, create training and evaluation datasets, fine-tune task-specific models, evaluate quality, and deploy them on dedicated infrastructure.

Integrating with the Catalyst Gateway was the first step and required adding only a few lines of code to Gravity's existing LLM requests. The gateway let the Catalyst platform gather metrics on live production traffic while the proxied 70B model on Cerebras kept handling requests and serving responses in production, with less than 10 ms of added overhead.
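As a rough illustration of what "a few lines of code" can mean for a proxy-style integration, the sketch below builds the same chat request against either a direct provider URL or a gateway URL. The URLs, the `X-Upstream-Authorization` header, and the model identifier are hypothetical placeholders, not the documented Catalyst or Cerebras API. The point is only that the request body stays the same while the endpoint changes.

```python
import json
import urllib.request

# Hypothetical endpoints for illustration only; not real Catalyst or Cerebras URLs.
DIRECT_URL = "https://provider.example.com/v1/chat/completions"
GATEWAY_URL = "https://gateway.example.com/v1/chat/completions"


def build_chat_request(messages, api_key, use_gateway=False, upstream_key=None):
    """Build (but do not send) an HTTP request for a chat completion.

    With use_gateway=True the request targets the gateway, which is assumed
    to record the request/response pair and forward it to the upstream model.
    """
    url = GATEWAY_URL if use_gateway else DIRECT_URL
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {api_key}",
    }
    if use_gateway and upstream_key is not None:
        # Hypothetical header carrying the upstream provider credential.
        headers["X-Upstream-Authorization"] = f"Bearer {upstream_key}"
    body = json.dumps({"model": "example-70b", "messages": messages}).encode("utf-8")
    return urllib.request.Request(url, data=body, headers=headers, method="POST")
```

Because the payload is identical in both modes, switching traffic to the gateway (or back) is a configuration change and does not touch prompt or response handling.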

"What sold me was that we didn't have to commit to anything. We pointed our LLM calls at the gateway, kept running on Cerebras, and just let Inference.net see our actual production traffic. They came back with both a trained model and the eval results showing it matched the current model. We pointed at a gateway and got back a deployable model." — Zach Oldham, CEO, Gravity

Once enough request and response pairs had been recorded, a training run was launched on Catalyst using Nvidia GPUs and Inference.net's internal fork of Megatron Bridge. The base model chosen for this task was a 1B model, with 70x fewer parameters than the 70B model in Gravity's existing setup.
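To make the data step concrete, here is a minimal sketch of turning captured request and response pairs into training and evaluation files. The record layout (`messages`, `response`), the chat-style JSONL output, and the 10% eval split are assumptions for illustration. The source does not describe Catalyst's internal dataset format.

```python
import hashlib
import json


def to_example(record):
    """Convert one captured pair into a chat-style training example."""
    return {
        "messages": record["messages"]
        + [{"role": "assistant", "content": record["response"]}]
    }


def split_bucket(record, eval_fraction=0.1):
    """Deterministically assign a record to 'train' or 'eval' by hashing its request."""
    key = json.dumps(record["messages"], sort_keys=True).encode("utf-8")
    digest = int(hashlib.sha256(key).hexdigest(), 16)
    return "eval" if (digest % 1000) < eval_fraction * 1000 else "train"


def build_datasets(records, eval_fraction=0.1):
    """Deduplicate by request, then return {'train': [...], 'eval': [...]} as JSONL lines."""
    seen = set()
    out = {"train": [], "eval": []}
    for record in records:
        key = json.dumps(record["messages"], sort_keys=True)
        if key in seen:
            continue  # keep the first response seen for an identical request
        seen.add(key)
        out[split_bucket(record, eval_fraction)].append(json.dumps(to_example(record)))
    return out
```

Hashing the request, instead of shuffling randomly, keeps a given request in the same split across retraining runs, so evaluation examples do not leak into later training sets.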

After training, Catalyst's LLM-as-a-judge evals compared the 70B model and the fine-tuned 1B model across different eval sets and rubrics to ensure that response quality was maintained. Having been trained on production data with real edge cases, the custom model matched or exceeded the previous model across the evaluations.
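A pairwise LLM-as-a-judge comparison can be sketched as follows. This is a generic harness under stated assumptions, not Catalyst's eval implementation: `judge` is a hypothetical callable that would wrap a call to a judge model and return `"a"`, `"b"`, or `"tie"` for a given rubric. The order of the two responses is randomized to reduce position bias.

```python
import random
from collections import Counter


def compare_models(examples, judge, rubric, seed=0):
    """Tally baseline vs. candidate verdicts from a pairwise judge.

    examples: iterable of dicts with 'prompt', 'baseline', and 'candidate' keys.
    judge:    callable(prompt, response_a, response_b, rubric) -> 'a' | 'b' | 'tie'
              (hypothetical; in practice this would call a judge LLM).
    """
    rng = random.Random(seed)
    tally = Counter()
    for ex in examples:
        # Randomize which model is shown as "a" so position bias averages out.
        candidate_is_a = rng.random() < 0.5
        if candidate_is_a:
            a, b = ex["candidate"], ex["baseline"]
        else:
            a, b = ex["baseline"], ex["candidate"]
        verdict = judge(ex["prompt"], a, b, rubric)
        if verdict == "tie":
            tally["tie"] += 1
        elif (verdict == "a") == candidate_is_a:
            tally["candidate"] += 1
        else:
            tally["baseline"] += 1
    return tally


def matches_or_exceeds(tally):
    """True when the candidate wins at least as often as the baseline."""
    return tally["candidate"] >= tally["baseline"]
```

Running the same harness once per eval set and rubric gives a per-rubric tally, which is one way to check a "matches or exceeds on every test" claim.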

With the model ready for production, Inference.net deployed it through Catalyst on dedicated infrastructure sized for Gravity's workload using standard Nvidia GPUs.

- Base model: 1B parameters, 70x smaller than Gravity's previous 70B model.

- Training: Inference.net's internal fork of Megatron Bridge on Nvidia H100s, using production data captured via Catalyst Gateway.

- Evaluation: LLM-as-a-judge across multiple eval sets and rubrics, with the fine-tuned 1B matching or exceeding the 70B on every test.

- Deployment: Dedicated infrastructure sized for Gravity's workload, handling millions of requests per day.

Gravity now had a specialized 1B model that could handle their production volume without relying on the previous 70B setup on Cerebras.

"I'll be honest, I didn't think a 1B was going to hold up. We'd tried smaller models before and the quality always fell off. The Inference.net team trained one that matched our 70B model on every eval we tested." — Leo Martinez, CTO, Gravity

Once Gravity had decided to move forward with Inference.net, the full switch took less than a week. In that time, the Inference.net team helped collect production traffic, train the custom model, run evals, deploy it on dedicated infrastructure, and move Gravity over to the new endpoint.

Throughout the process, the Gravity team had direct access to the Inference.net team in Slack for fast support, technical questions, and hands-on guidance. Instead of being left to figure out model training and deployment on their own, Gravity had a partner helping them debug, evaluate, and optimize the setup in real time.

Results

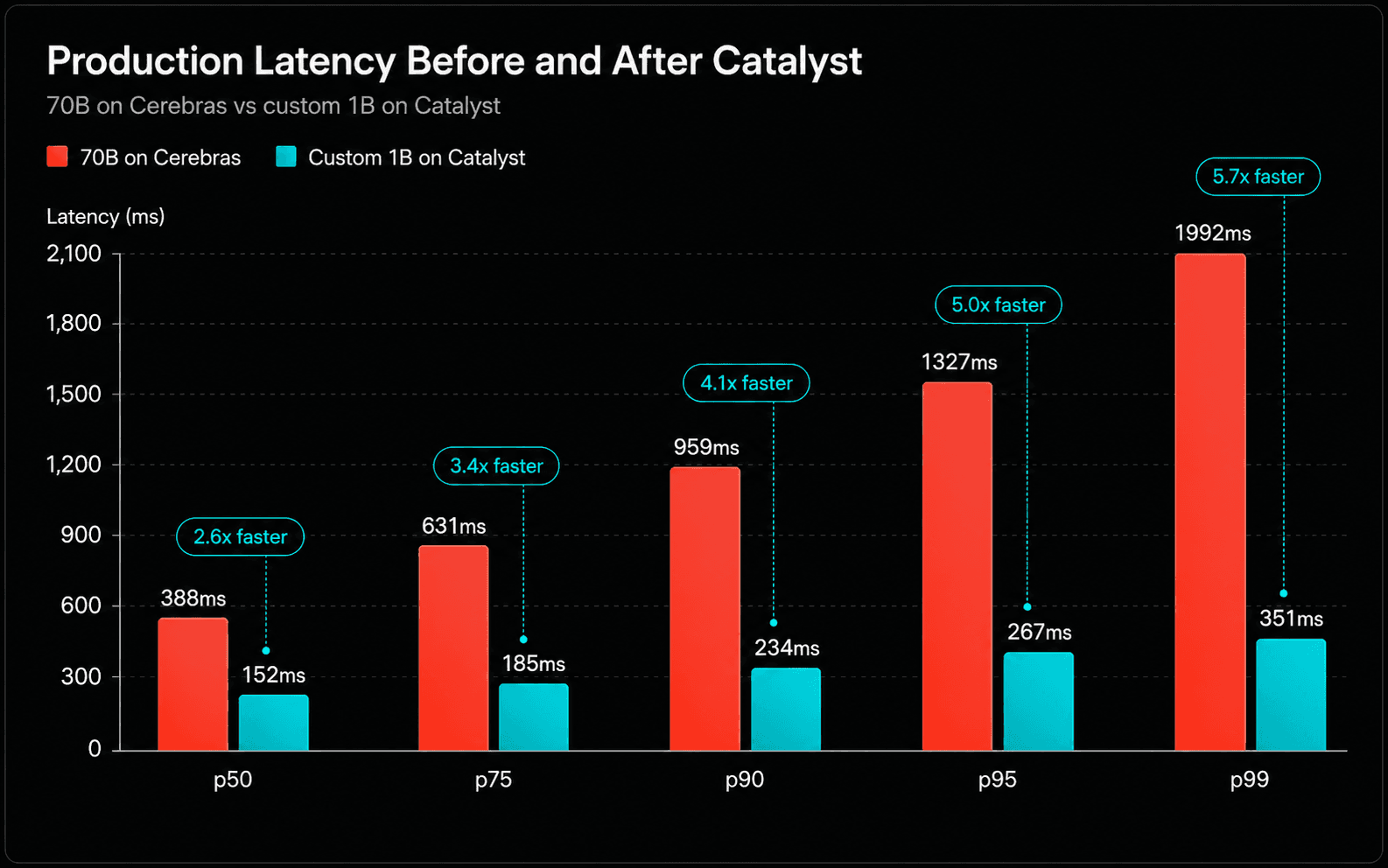

After deployment, Catalyst's built-in observability metrics were used to benchmark the new model against the previous solution. As the graph shows, the fine-tuned model improved latency at every percentile:

- p50: 388 ms → 152 ms (2.6x faster)

- p75: 631 ms → 185 ms (3.4x faster)

- p90: 959 ms → 234 ms (4.1x faster)

- p95: 1327 ms → 267 ms (5.0x faster)

- p99: 1992 ms → 351 ms (5.7x faster)
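The speedup factors can be reproduced directly from the reported percentiles. The short check below uses only the latency figures listed above.

```python
# Reported latencies in milliseconds: (previous 70B setup, fine-tuned 1B model).
LATENCY_MS = {
    "p50": (388, 152),
    "p75": (631, 185),
    "p90": (959, 234),
    "p95": (1327, 267),
    "p99": (1992, 351),
}


def speedup(before_ms, after_ms):
    """Ratio of old to new latency, rounded to one decimal place."""
    return round(before_ms / after_ms, 1)


for name, (before, after) in LATENCY_MS.items():
    saved = before - after
    print(f"{name}: {before} ms -> {after} ms "
          f"({speedup(before, after)}x faster, {saved} ms saved)")
```

The ratio grows from 2.6x at the median to 5.7x at p99, so the improvement is largest in the tail of the distribution.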

The average payload sizes for this benchmark were 3.08 KB of input and 1.07 KB of output. Tail latency was the biggest win. In an AI-native ad network, ads that arrive late are ads that the user might never see. Bringing p99 from nearly 2 seconds down to 351 ms means more ads land while the user is still in the conversation. This helps improve engagement and conversion for advertisers and creates a more polished product experience. Moving off Cerebras to a smaller specialized model on Catalyst also cut Gravity's inference cost roughly 10x at production volume, thanks to the dramatic decrease in model size and compute required.

For Gravity, Catalyst removed the typical tradeoff between cost, latency, and quality. By using production traffic to train and evaluate a specialized model, Gravity moved to a model that was faster, cheaper, and better suited to the task than the previous 70B setup.

What's next

Gravity will continue to scale on the custom fine-tuned model on Catalyst and plans to expand to more task-specific models. As traffic grows and new advertiser categories come online, they'll have new tasks the model needs to handle, and more production data to train on. The training pipeline is already in place, so as needs change and patterns in traffic evolve, Gravity can keep retraining and shipping better models using Catalyst without changing their entire setup.
