Articles

Our team’s insights on building better AI systems.

Aug 28, 2025

The Ultimate LLM Benchmark Comparison Guide (2025 Edition)

Navigate the LLM landscape with our ultimate guide. Get a comprehensive LLM benchmark comparison for all top models in 2025.

Aug 27, 2025

What Is Inference Latency & How Can You Optimize It?

Reduce AI response time. Learn what inference latency is and discover powerful optimization techniques to boost your model's speed.

Aug 26, 2025

Top 22 LLM Performance Benchmarks for Measuring Accuracy and Speed

Evaluate LLMs with our guide to the top 22 LLM performance benchmarks. Measure accuracy, speed, and overall capabilities with precision.

Aug 25, 2025

What are Serving ML Models? A Guide with 21 Tools to Know

Learn about serving ML models and get our expert guide to 21 top tools. Deploy your models for real-time predictions and scalable applications.

Aug 24, 2025

Step-By-Step LLM Serving Guide for Production AI Systems

A complete guide to LLM Serving. Learn how to deploy large language models to production with our step-by-step tutorial.

Aug 23, 2025

20 Proven LLM Performance Metrics for Smarter AI Evaluation

Evaluate your AI models with precision. Learn about 20 essential LLM performance metrics to ensure accuracy, relevance, and safety.

Aug 22, 2025

KV Cache Explained with Examples from Real World LLMs

Learn what the KV cache is and why it's vital for LLMs. Our guide explains it with real-world examples.

Aug 20, 2025

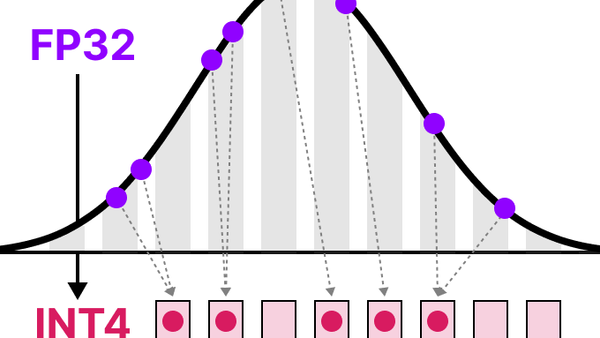

A Practical Guide to Post Training Quantization for Edge AI

Post-training quantization (PTQ) reduces model size and lowers latency while largely preserving accuracy, making it a key technique in model optimization.

Aug 18, 2025

Top 46 LLM Use Cases to Boost Efficiency & Innovation

Boost efficiency & innovation! Explore 46 powerful LLM use cases across industries, from automation to content creation.

Aug 18, 2025

A Beginner’s Guide to LLM Quantization for AI Efficiency

Learn about LLM quantization and make AI models smaller and faster. Our beginner's guide demystifies this key efficiency technique.