Mar 9, 2026

Speculative Decoding: How It Works, Why It's Fast, and How to Use It

Inference Research

The Problem with One Token at a Time

Even on top-tier hardware, LLM inference spends most of its time waiting. Autoregressive decoding — the mechanism behind every modern large language model — generates tokens one at a time. Each token requires a full forward pass through every layer of the model. The next token can't start until the previous one finishes. On a single A100, a 70B-parameter model produces roughly 30–50 tokens per second. Users notice any latency above roughly 100ms per token in an interactive chat session.

That sequential bottleneck is the root problem that speculative decoding was designed to solve. Instead of waiting for the large model to generate one token at a time, speculative decoding lets a small, fast draft model sprint ahead and guess the next several tokens — then hands those guesses to the large model for verification in a single parallel pass. The result: 2–4× faster inference with no change to output quality.

This guide takes you from the core idea to a working production setup. You'll see exactly how the algorithm works, how each major variant (EAGLE-2, Medusa, Lookahead, Prompt Lookup) differs, what real benchmarks show, and how to implement it with HuggingFace Transformers and vLLM — with working code for both.


---

What Is Speculative Decoding?

The core insight behind speculative decoding comes down to one observation: a transformer model's forward pass over K tokens takes nearly the same wall-clock time as a forward pass over 1 token, because the bottleneck is memory bandwidth — reading model weights from HBM into compute units — not floating-point operations per se. If you can fill that forward pass with K useful tokens to check instead of just 1 to generate, you reclaim parallelism that autoregressive decoding leaves on the table.
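To make the "K tokens cost about the same as 1" claim concrete, here is a rough roofline-style estimate. It is a sketch under loud assumptions: the hardware figures (2,000 GB/s of memory bandwidth, 300 TFLOPS of half-precision compute) are round numbers chosen for illustration, the function name `step_time_ms` is hypothetical, and the model ignores attention, KV-cache reads, kernel launch overhead, and multi-GPU communication.

```python
def step_time_ms(tokens, params=70e9, bytes_per_param=2,
                 bandwidth_gbs=2000.0, peak_tflops=300.0):
    """Idealized time for one forward pass that scores `tokens` positions."""
    # Every pass streams all weights from memory once, however many tokens it scores.
    memory_ms = params * bytes_per_param / (bandwidth_gbs * 1e9) * 1e3
    # Roughly 2 FLOPs per parameter per token for the matrix multiplies.
    compute_ms = 2 * params * tokens / (peak_tflops * 1e12) * 1e3
    # The slower of the two limits sets the step time in this simple model.
    return max(memory_ms, compute_ms)

for k in (1, 4, 8, 64, 256):
    print(f"{k:>3} tokens per pass: {step_time_ms(k):6.1f} ms")
```

Under these assumptions the pass stays memory-bound until well over a hundred tokens, so scoring 8 positions costs the same as scoring 1. Real hardware will not be this clean, but the shape of the curve is the point.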

Speculative decoding is an inference acceleration technique for large language models in which a small, fast draft model generates several candidate tokens, and a larger target model verifies them in a single parallel forward pass — accepting correct tokens and resampling incorrect ones to preserve output quality.

The target model doesn't change its weights, its sampling strategy, or its outputs. It simply runs its forward pass over a richer input: the original context plus the candidate tokens from the draft model. At each candidate position, the target model produces a probability distribution and compares it to what the draft model predicted. Tokens that the draft model predicted with sufficient fidelity are accepted; any mismatch triggers a correction step that samples from the residual distribution. The net effect is that you accept multiple tokens per verification cycle instead of one — with the final output distribution provably identical to standard decoding.

This idea arrived independently from two research groups in 2023. Leviathan et al. published "Fast Inference from Transformers via Speculative Decoding" at ICML 2023, while Chen et al. published "Accelerating Large Language Model Decoding with Speculative Sampling" around the same time. Both papers derived the same acceptance-rejection mechanism and proved its output equivalence, a sign that the solution follows naturally once you understand the bottleneck.

Speculative decoding differs from several techniques that often come up in the same conversation:

- Beam search generates multiple candidate sequences and keeps the best — this changes the output distribution. Speculative decoding doesn't change the distribution at all.

- Batching helps throughput by processing many independent requests together. It doesn't reduce latency for any single request. Speculative decoding targets per-request latency.

- Quantization reduces model size and increases throughput by using lower-precision arithmetic, at some quality cost. Speculative decoding is orthogonal to quantization — you can use both simultaneously.

- KV cache stores computed key-value pairs across the context so the model doesn't recompute them on every step. Speculative decoding works with KV caching — they address different parts of the inference bottleneck.

The accept-reject mechanism is where the algorithm's guarantees live. Here's how it works.

---

How Speculative Decoding Works

Walking through each step shows exactly when and why the speedup occurs:

- Draft phase. Given the current context, the draft model autoregressively generates K candidate tokens (typical values: K = 4–8). This is fast because the draft model is 10–20× smaller than the target model — think a 7B model drafting for a 70B target.

- Score phase. The target model runs a single forward pass over the concatenated sequence [context + K draft tokens]. Because the attention mask is causal, this single pass produces the target's probability distribution at each of the K draft positions simultaneously, plus one more for the position after the last draft token.

- Verify phase. For each draft token at position i, compare the target model's probability p(x_i) to the draft model's probability q(x_i). Accept the draft token with probability min(1, p(x_i) / q(x_i)). If p ≥ q, accept unconditionally; if p < q, accept with probability p/q and reject otherwise.

- Correction phase. At the first rejected position (say, position j), discard the remaining draft tokens and sample a replacement token from a corrected distribution: max(0, p(x) − q(x)), normalized. This correction step is what makes the output distribution provably identical to what the target model would have produced alone. If all K tokens are accepted, no correction is needed; instead, sample one additional bonus token directly from the target distribution.

- Advance and repeat. Slide the context window forward by the number of accepted tokens plus one (the correction/bonus token), then start a new draft cycle from the updated context.
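The steps above can be sketched as a single verification cycle in plain Python. This is a toy illustration, not any framework's implementation: distributions are ordinary lists of probabilities over a tiny vocabulary, and the names `sample` and `speculative_step` are hypothetical.

```python
import random

def sample(dist, rng):
    """Draw a token id from a list of (possibly unnormalized) weights."""
    return rng.choices(range(len(dist)), weights=dist, k=1)[0]

def speculative_step(draft_tokens, q, p, rng):
    """Verify K draft tokens and return the tokens to append to the context.

    q[i] is the draft distribution that produced draft_tokens[i].
    p[i] is the target distribution at the same position, and p[K] is the
    target distribution after the last draft token (used for the bonus token).
    """
    out = []
    for i, tok in enumerate(draft_tokens):
        # Accept with probability min(1, p/q).
        if rng.random() < min(1.0, p[i][tok] / q[i][tok]):
            out.append(tok)
            continue
        # First rejection: resample from max(0, p - q) and stop this cycle.
        residual = [max(0.0, pt - qt) for pt, qt in zip(p[i], q[i])]
        out.append(sample(residual, rng))
        return out
    # All K drafts accepted: take one bonus token from the target distribution.
    out.append(sample(p[len(draft_tokens)], rng))
    return out
```

Each call returns between 1 and K + 1 tokens, which is why a cycle never produces less than standard decoding would from one target pass.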

The key metric controlling speedup is the acceptance rate α: the per-token probability that a draft token is accepted by the target model. Because verification stops at the first rejection, a draft token only counts if every token before it was also accepted, so the expected number of tokens produced per cycle (including the correction or bonus token) is (1 − α^(K+1)) / (1 − α). At α = 0.9 and K = 5 that is about 4.7 tokens per cycle; at α = 0.5 it is just under 2, and once drafting cost is included speculative decoding is only marginally faster than standard decoding. The relationship isn't linear: acceptance compounds along the chain, so a gain in α at the high end is worth far more than the same gain at the low end.

Output quality is provably identical because of the correction step. After any rejection at position j, sampling from max(0, p − q) normalized ensures the marginal distribution over token j is exactly p(x_j | context) — the same distribution the target model would have produced through standard decoding. The induction holds for all positions, so the joint distribution over the entire generated sequence is identical to what the target model alone would produce. For greedy decoding (temperature = 0), this means outputs are bit-for-bit identical; for sampling, the distribution matches.
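The single-position version of this argument can be checked exactly with rational arithmetic. The sketch below computes the distribution of the emitted token under accept-or-resample and compares it to the target distribution; the function name `output_distribution` and the example probabilities are made up for illustration.

```python
from fractions import Fraction as F

def output_distribution(p, q):
    """Distribution of the token emitted at one position by accept/resample."""
    # Token x is proposed with probability q[x] and accepted with probability
    # min(1, p[x]/q[x]), so it is emitted via acceptance with mass min(p[x], q[x]).
    accepted = [min(pi, qi) for pi, qi in zip(p, q)]
    reject_mass = 1 - sum(accepted)
    # On rejection, the replacement is drawn from max(0, p - q), normalized.
    residual = [max(F(0), pi - qi) for pi, qi in zip(p, q)]
    total = sum(residual)
    if total == 0:  # p == q, so nothing is ever rejected
        return accepted
    return [a + reject_mass * r / total for a, r in zip(accepted, residual)]

p = [F(6, 10), F(3, 10), F(1, 10)]  # target distribution (illustrative)
q = [F(2, 10), F(5, 10), F(3, 10)]  # draft distribution (illustrative)
print(output_distribution(p, q) == p)  # True
```

The rejected mass, 1 − Σ min(p, q), equals the total of max(0, p − q), so the resampling term adds back exactly what acceptance under-delivers at each token.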

There is one hard constraint: the draft and target models must share the same tokenizer. Because the verification step compares token probabilities position by position, both models must be operating over the same vocabulary. A mismatched tokenizer produces nonsense comparisons and corrupts outputs. For most deployments, this means same-family models — a Llama-3 draft for a Llama-3 target, or a Mistral-Small draft for a Mistral-Large target.

The sizing rule of thumb is a 10–20× size ratio between target and draft. If the draft is too small relative to the task, acceptance rates collapse and you gain nothing from the parallelism. If the draft is too large, the drafting cost itself starts to eat the speedup. For a 70B target, a 3B–8B draft is the practical sweet spot. For a 13B target, a 1B draft works well.

---

Speculative Decoding Variants: EAGLE, Medusa, Lookahead, and More

Vanilla speculative decoding requires deploying a second model alongside the target — adding VRAM, loading time, and operational complexity. Several variants address this limitation or push performance beyond the baseline. Knowing which to reach for depends on your model family, your infrastructure, and whether you have a fine-tuning budget.

Standard Speculative Decoding

The baseline: a separate draft model of the same family as the target, sharing its tokenizer. This approach achieves the highest acceptance rates when a strong same-family smaller model exists — for example, Llama-3-8B-Instruct drafting for Llama-3-70B-Instruct. The operational cost is running two models in memory simultaneously. If you have the VRAM headroom, this is often the simplest production path.

EAGLE and EAGLE-2

EAGLE (Li et al., 2024) eliminates the separate draft model by training a lightweight autoregressive head that sits on top of the target model's own feature vectors. Instead of a second model predicting next tokens from scratch, the EAGLE head predicts the target model's next hidden states — a much easier task because it has access to the target's internal representations. This dramatically improves acceptance rates compared to a small independent model.

EAGLE-2 extends this with dynamic draft trees: instead of always drafting exactly K tokens in a linear chain, it builds a tree of possible continuations and expands branches adaptively based on the target model's confidence in each path. This context-aware speculation length squeezes additional efficiency from high-certainty generations.

Reported EAGLE-2 speedups on MT-Bench tasks fall in the 3.0–3.8× range for models such as Vicuna-13B and Llama-3-70B. Pre-trained EAGLE heads are available for the major open model families (Llama, Vicuna, Mistral) via the official EAGLE repository. For supported models, this is the strongest variant available without writing custom code.

Medusa

Medusa (Cai et al., 2024) takes a different structural approach: it adds multiple parallel decoding heads to the target model, where each head is trained to predict the token at offset +1, +2, ..., +K from the current position. Verification uses tree attention — a single forward pass that evaluates all candidate combinations simultaneously.
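As a rough picture of what "all candidate combinations" means, the sketch below enumerates every path through the per-head top-k proposals. It is a simplification under stated assumptions: the function name `medusa_candidates` and the token ids are hypothetical, and a real implementation would prune this set and share common prefixes through a tree-attention mask rather than materialize each path separately.

```python
from itertools import product

def medusa_candidates(head_topk):
    """Enumerate candidate continuations from per-head top-k token lists.

    head_topk[i] holds the tokens proposed by the head for offset +(i + 1).
    """
    return [list(path) for path in product(*head_topk)]

# Hypothetical token ids: 2 choices at +1, 2 at +2, 1 at +3 gives 4 candidates.
candidates = medusa_candidates([[11, 12], [21, 22], [31]])
for path in candidates:
    print(path)
```

The candidate count is the product of the per-head k values, which is why head count and k have to stay small for verification to fit in one pass.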

The key advantage: Medusa heads are fine-tuned on top of the target model itself, requiring no separate model and relatively modest training compute (roughly 1–2 GPU-days on a dataset representative of your use case). Speedups average 2.2–2.8×. Medusa is supported in vLLM and TensorRT-LLM (see the framework table later in this guide), making it straightforward to deploy once heads are trained.

Lookahead Decoding

Lookahead Decoding (Fu et al., 2024) takes the opposite philosophy: no model and no training at all. It builds an n-gram cache from the model's own generation history and uses n-gram matches to propose candidate continuations. When the current context matches a previously seen n-gram, those cached tokens become candidates for the next cycle.

The tradeoff is lower average speedup (roughly 1.5–2×) compared to model-based methods. But Lookahead is zero-cost to deploy and requires no infrastructure changes — making it a useful baseline or fallback for any model where no draft model or EAGLE head exists.

Prompt Lookup Decoding

A highly effective special case: instead of n-grams from generation history, this method finds n-gram matches within the input prompt itself and uses those as candidates. It's extraordinarily effective for tasks where the output closely mirrors the input — document editing, code completion, summarization, retrieval-augmented generation. For code tasks specifically, speedups can reach 3× or more. It's available in HuggingFace Transformers via the prompt_lookup_num_tokens parameter, requiring literally one argument change.
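The matching step can be sketched in a few lines. This is an illustrative version, not the library's code: the function name `prompt_lookup_candidates` and its parameters are hypothetical, and tokens are plain integers.

```python
def prompt_lookup_candidates(prompt, generated, ngram_size=3, num_candidates=10):
    """Propose draft tokens by finding the trailing n-gram inside the prompt."""
    tail = (prompt + generated)[-ngram_size:]
    if len(tail) < ngram_size:
        return []
    # Scan the prompt right to left so the most recent match wins.
    for start in range(len(prompt) - ngram_size, -1, -1):
        if prompt[start:start + ngram_size] == tail:
            follow = prompt[start + ngram_size:start + ngram_size + num_candidates]
            if follow:  # skip matches at the very end of the prompt
                return follow
    return []

prompt = [5, 6, 7, 8, 9, 10]
generated = [1, 6, 7]
print(prompt_lookup_candidates(prompt, generated, ngram_size=2))
```

The proposed tokens are then verified by the target model exactly like drafts from a model, so a bad match costs only the wasted verification positions.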

Self-Speculative Decoding

Uses early-exit layers of the target model as an implicit draft — the model runs a few layers, produces a candidate, then verifies with the full depth. The upside is no extra model or training; the downside is significant custom engineering to implement early exits cleanly, and acceptance rates that depend heavily on task and model architecture.

| Variant | Draft Source | Speedup Range | Training Required | Key Requirement | Best For |
|---|---|---|---|---|---|
| Standard | Separate same-family model | 2.0–2.8× | None | Same tokenizer + model family | Target has a strong small sibling |
| EAGLE-2 | Lightweight head on target's features | 3.0–3.8× | Head training (~1 GPU-day) | Pre-trained head or training budget | Max performance on supported models |
| Medusa | Multiple heads fine-tuned on target | 2.2–2.8× | Heads fine-tuning (1–2 GPU-days) | Representative fine-tuning dataset | Strong gains without a separate model |
| Lookahead | n-gram cache from generation history | 1.5–2.0× | None | None | Zero-setup fallback for any model |
| Prompt Lookup | n-gram matches within input prompt | 1.5–3.0× | None | Prompt-heavy or repetitive input | Document editing, code, RAG pipelines |

Choosing a variant depends on what you have available: if a pre-trained EAGLE head exists for your model, use EAGLE-2 — it delivers the best performance with the least infrastructure overhead. If you can fine-tune, Medusa is a strong option. If you need something running today with no training overhead, Prompt Lookup Decoding or Lookahead Decoding get you there immediately.

---

Performance: How Fast Is Speculative Decoding in Practice?

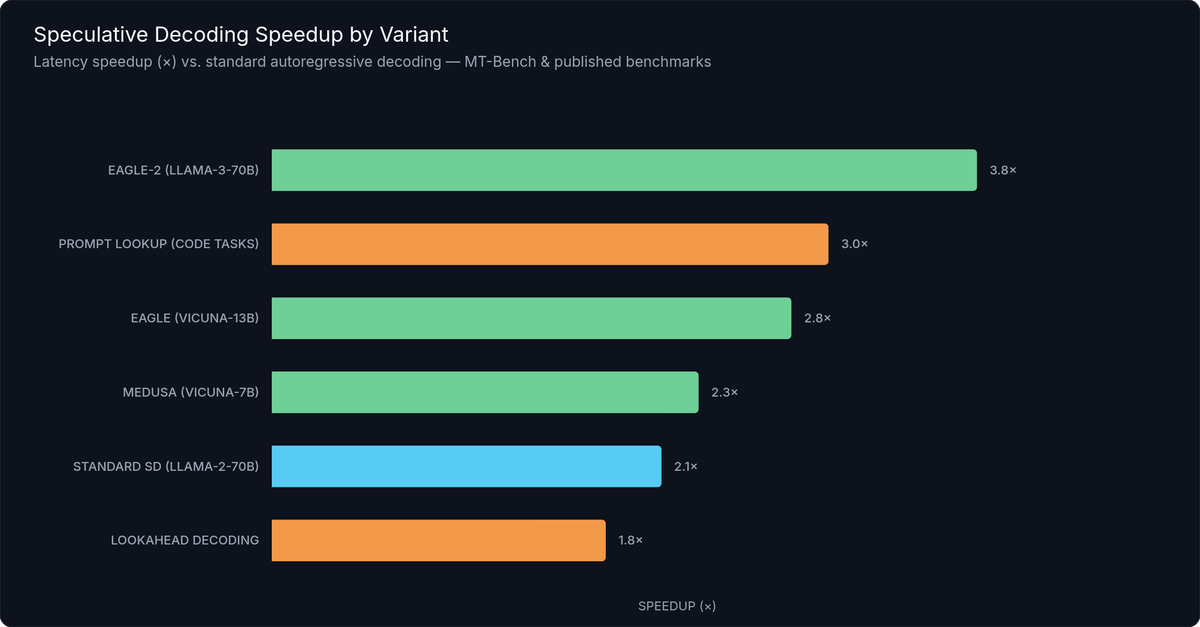

Published benchmarks across the major variants show speculative decoding reliably delivers 1.5–4× latency improvement on single-stream inference, with the specific range depending on variant, model size, and task type.

Here are the key data points from published benchmarks:

- Standard speculative decoding (Llama-2-70B + Llama-2-7B draft): ~2.1× speedup on MT-Bench

- EAGLE (Vicuna-13B): ~2.8× speedup on MT-Bench

- EAGLE-2 (Llama-3-70B): ~3.8× speedup on MT-Bench

- Medusa (Vicuna-7B): ~2.3× speedup on MT-Bench

- Lookahead Decoding: ~1.8× average (higher on code-generation tasks)

- Prompt Lookup Decoding on code tasks: up to 3.0× due to high repetition in code completions

Figure 1: Speculative decoding speedup comparison across major variants — MT-Bench benchmarks, single-stream inference

These numbers represent latency speedup — tokens per second for a single generation stream — not aggregate throughput across many concurrent requests. That difference is critical when planning a production deployment.

The acceptance rate is the dominant variable under the speedup formula. A useful approximation is:

speedup ≈ (1 − α^(K+1)) / ((1 − α) · (1 + K · r))

where α is the per-token acceptance rate, K is the draft length, and r is the cost of one draft forward pass relative to one target forward pass. The numerator is the expected number of tokens produced per cycle; the denominator is the cost of a cycle (K draft passes plus one target pass) in units of one target pass. Given the 10–20× size difference, r is typically 0.05–0.1, so at K = 5 the denominator is 1.25–1.5. At α = 0.8 and K = 5, you'd expect roughly 3.7 tokens per cycle, which translates to roughly 2.5–3× depending on the cost ratio. At α = 0.4, the formula yields only about 1.1–1.3×: barely any speedup.
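For a quick feel for these numbers, the sketch below evaluates the estimate from Leviathan et al. (2023), (1 − α^(K+1)) / ((1 − α)(1 + K·r)), which assumes every draft token is accepted independently with the same probability α. The function names are hypothetical, and the sample values are simply the ones discussed in this section.

```python
def expected_tokens(alpha, k):
    """Expected tokens emitted per cycle, counting the correction/bonus token."""
    if alpha == 1.0:
        return k + 1.0
    return (1 - alpha ** (k + 1)) / (1 - alpha)

def estimated_speedup(alpha, k, r):
    """Tokens per cycle divided by cycle cost in units of one target pass."""
    return expected_tokens(alpha, k) / (1 + k * r)

for alpha in (0.8, 0.4):
    for r in (0.05, 0.1):
        print(f"alpha={alpha}, K=5, r={r}: {estimated_speedup(alpha, 5, r):.2f}x")
```

Treat the result as an upper-level sanity check: measured acceptance rates vary token by token, and real cycles carry scheduling and memory overhead that this estimate leaves out.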

Task type is the clearest predictor of α. Code generation sees the highest acceptance rates because tokens are more constrained by syntax and semantics: the correct next token is often the only reasonable token. Structured output (JSON, XML, templates) behaves similarly. Creative writing with high temperature sees the lowest acceptance rates, because the output is intentionally less predictable and the draft model's guesses align less often with the target model's preferences.

One important caveat: these speedups apply specifically to single-stream or low-batch inference. At batch sizes of 32 or more, the batch itself amortizes each weight read across many requests, so decoding is no longer dominated by memory bandwidth and there is little idle compute left for verification to use. The draft model's token proposals then create overhead without any corresponding benefit, and speculative decoding can actually slow things down in high-throughput batched serving. The break-even batch size is typically 8–16 concurrent requests; above that, continuous batching alone is the better optimization.

---

Implementing Speculative Decoding with HuggingFace Transformers

HuggingFace Transformers has supported speculative decoding natively since version 4.38 via the assistant_model parameter in generate(). The barrier to entry is a single argument change.

Prerequisites

- transformers >= 4.38

- Both target and draft model share the same tokenizer vocabulary

- Enough GPU VRAM for both models (use device_map="auto" to distribute across multiple GPUs or rely on CPU offloading if needed)

Basic Setup: assistant_model

The assistant_model parameter tells the generate() method to use speculative decoding with the provided smaller model as the draft:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
import torch
import time

# Load target model
model = AutoModelForCausalLM.from_pretrained(
    "meta-llama/Meta-Llama-3-70B-Instruct",
    torch_dtype=torch.float16,
    device_map="auto",
)

# Load draft model — same family, same tokenizer, much smaller
assistant_model = AutoModelForCausalLM.from_pretrained(
    "meta-llama/Meta-Llama-3-8B-Instruct",
    torch_dtype=torch.float16,
    device_map="auto",
)

# Always use the target model's tokenizer for both models
tokenizer = AutoTokenizer.from_pretrained("meta-llama/Meta-Llama-3-70B-Instruct")

# Place inputs on the device that holds the target model's first layers
inputs = tokenizer("The key to faster LLM inference is", return_tensors="pt").to(model.device)

# Baseline: standard autoregressive decoding
start = time.time()
baseline_outputs = model.generate(**inputs, max_new_tokens=200, do_sample=False)
baseline_time = time.time() - start

# Speculative decoding: pass the draft model as assistant_model
start = time.time()
outputs = model.generate(
    **inputs,
    assistant_model=assistant_model,
    max_new_tokens=200,
    do_sample=False,  # greedy; set True with temperature for sampling
    # num_assistant_tokens=5  # uncomment to hard-code draft length; default is adaptive
)
spec_time = time.time() - start

print(tokenizer.decode(outputs[0], skip_special_tokens=True))
print(f"Speedup: {baseline_time / spec_time:.2f}×")
```

The three parameters to know:

- `assistant_model`: The draft model instance. Must share tokenizer vocabulary with the main model — never mix model families here.

- `num_assistant_tokens`: How many tokens the draft model generates per cycle. Defaults to an adaptive mode that adjusts based on observed acceptance rate, which is generally the best option. Hard-code this (e.g., num_assistant_tokens=5) when you want predictable latency behavior.

- `assistant_early_exit`: For self-speculative decoding using early exit layers of the target model itself, where the model architecture supports it. Requires model-specific support.

Always measure your actual acceptance rate before committing to a draft model choice. Wrap generate() with time.time() and compare token generation speed against a baseline run without assistant_model. The theoretical speedup from the formula above is a starting point, not a guarantee — real acceptance rates vary by prompt domain, system prompt length, and sampling temperature.

Prompt Lookup Decoding: Zero Extra Models

If you don't have a draft model available, Prompt Lookup Decoding requires nothing beyond a single extra argument:

```python
outputs = model.generate(
    **inputs,
    prompt_lookup_num_tokens=10,  # propose up to 10 candidate tokens copied from a matching span of the prompt
    max_new_tokens=200,
)
```

This is the fastest path to measurable speedup for summarization, code editing, RAG pipelines, and any task where the output recombines content from a long input. For pure chat or creative tasks, gains will be modest.

HuggingFace also integrates EAGLE heads via the same assistant_model API — you load the EAGLE-patched model checkpoint as the assistant, and the library handles the tree-attention verification automatically. Check the EAGLE official repository for compatible model checkpoints.

---

Speculative Decoding in vLLM and Other Frameworks

For production LLM serving, most teams reach for a dedicated inference framework rather than raw HuggingFace. Speculative decoding is a first-class feature across all the major options.

vLLM

vLLM is the dominant open-source LLM serving framework, and it has had native speculative decoding support since version 0.3. Using it via the Python API takes a handful of lines:

```python
from vllm import LLM, SamplingParams

llm = LLM(
    model="meta-llama/Meta-Llama-3-70B-Instruct",
    speculative_model="meta-llama/Meta-Llama-3-8B-Instruct",
    num_speculative_tokens=5,  # K: draft tokens per cycle; tune based on task
)

sampling_params = SamplingParams(temperature=0.0, max_tokens=200)
outputs = llm.generate("The key to faster inference is", sampling_params)
print(outputs[0].outputs[0].text)
```

For the OpenAI-compatible HTTP server, speculative decoding is a pair of launch flags, with no code change required for clients:

```bash
vllm serve meta-llama/Meta-Llama-3-70B-Instruct \
    --speculative-model meta-llama/Meta-Llama-3-8B-Instruct \
    --num-speculative-tokens 5
```

Once the server is running, your existing OpenAI SDK calls work unchanged. The speculative decoding happens transparently inside vLLM's scheduler and block manager. For a production vLLM serving guide, including memory management and batching configuration, see our dedicated article.

Other Frameworks

| Framework | SD Support Since | Supported Methods | Config Style | Notes |
|---|---|---|---|---|
| HuggingFace Transformers | v4.38 | Standard, Prompt Lookup, EAGLE | assistant_model param in generate() | Adaptive K via num_assistant_tokens |
| vLLM | v0.3 | Standard, Medusa, EAGLE | Python API + CLI flags | Best production serving option |
| TGI | v1.4 | Standard | --speculate N launch flag | One-flag activation for existing TGI deployments |
| TensorRT-LLM | 2024 | Standard, Medusa | SpeculativeDecodingMode enum | Best raw performance; requires TRT compilation |
| SGLang | 2024 | Standard | --spec-draft-model flag | Good fit for structured output workloads |

TGI (Text Generation Inference) by HuggingFace supports speculative decoding via the --speculate N flag since v1.4. If you're already running TGI, enabling it is a one-line change to your launch command.

TensorRT-LLM from NVIDIA exposes speculative decoding through its SpeculativeDecodingMode enum and supports both draft-target model pairs and Medusa heads. It delivers the strongest raw performance for teams locked to NVIDIA hardware who are willing to handle TensorRT compilation and the associated build complexity.

SGLang supports speculative decoding via --spec-draft-model at server launch. SGLang's runtime-efficient architecture pairs well with speculative decoding for structured output generation in particular.

The same constraints apply across frameworks: same-family draft model, shared tokenizer, and low-to-moderate batch sizes. Framework support is mature enough that you don't need to implement speculative decoding from scratch in any standard deployment scenario.

---

Choosing the Right Draft Model

The draft model choice has the biggest single impact on whether speculative decoding actually helps. A good match delivers near-maximum speedup; a poor one adds complexity for no gain.

The Core Rules

Same model family, always when possible. A Llama-3-8B-Instruct draft for a Llama-3-70B-Instruct target will dramatically outperform any cross-family pairing. The reason is distribution alignment: models trained on the same data pipeline with the same tokenizer produce much more similar probability distributions, so the draft model's guesses align with the target model far more often. Cross-family pairings (e.g., Gemma draft for Llama target) often yield acceptance rates too low to be worthwhile.

10–20× size ratio. The draft model needs to be fast enough that generating K tokens costs significantly less than one target model forward pass. For a 70B target, that means a 3B–8B draft. For a 13B target, 1B is the right range. Going larger than 1/10th the target size risks the draft eating your speedup; going smaller than 1/20th risks poor acceptance rates.

Shared tokenizer is non-negotiable. Both models must use exactly the same tokenizer — the same vocabulary, the same special tokens, the same byte-pair encoding rules. This is typically enforced automatically by staying within the same model family, but always verify before deploying: load both tokenizers and confirm their vocab_size and special_tokens_map match.
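One way to run that check is sketched below. It compares the full token-to-id mapping rather than only the vocabulary size, which is a stricter test than the one described above. The function name `tokenizer_mismatches` is hypothetical; it only assumes objects that expose `get_vocab()` and `special_tokens_map`, as HuggingFace tokenizers do.

```python
def tokenizer_mismatches(target_tok, draft_tok):
    """Return a list of differences between two tokenizers (empty means compatible)."""
    problems = []
    # Same size is not enough: every token must map to the same id in both models.
    if target_tok.get_vocab() != draft_tok.get_vocab():
        problems.append("vocabulary (token -> id mapping) differs")
    # Special tokens (BOS, EOS, padding, ...) must also agree.
    if target_tok.special_tokens_map != draft_tok.special_tokens_map:
        problems.append("special_tokens_map differs")
    return problems
```

In practice you would pass two tokenizers loaded with `AutoTokenizer.from_pretrained(...)` for the target and draft checkpoints, and refuse to deploy the pair if the returned list is not empty.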

Instruct vs. base draft model. For instruction-following and chat applications, use an instruct-tuned draft model. The fine-tuning on instruction data shifts its distribution closer to what a chat-optimized target model produces — meaningfully higher acceptance rates on formatted prompts.

Common Proven Pairs

| Target Model | Recommended Draft | Size Ratio | Expected Speedup | Notes |
|---|---|---|---|---|
| Llama-3-70B-Instruct | Llama-3-8B-Instruct | ~8.75× | 2.5–3.0× | Best-in-class pairing for Llama-3 family |
| Llama-3-8B-Instruct | EAGLE head or Prompt Lookup | — | 2.5–3.5× (EAGLE) | No strong same-family smaller model |
| Mistral-7B-Instruct | EAGLE head or Prompt Lookup | — | 2.0–3.0× (EAGLE) | No official draft sibling; EAGLE heads available |
| Qwen2-72B-Instruct | Qwen2-7B-Instruct | ~10× | 2.0–2.5× | Good size ratio within family |
| Gemma-2-27B-IT | Gemma-2-9B-IT | ~3× | 1.8–2.2× | Lower ratio; still worthwhile for latency-sensitive deployments |
| CodeLlama-34B | CodeLlama-7B | ~5× | 2.0–2.5× | Shared code-focused training boosts acceptance rates |

When No Good Draft Exists

Some model families don't have a small same-family sibling. For these situations:

- Prompt Lookup Decoding is the zero-overhead default for document-heavy or code tasks. It requires no draft model and is available in both HuggingFace and vLLM today.

- EAGLE heads — if pre-trained heads exist for your model in the EAGLE repository, this is the highest-performance path and eliminates the separate model requirement entirely.

- Medusa heads — if you can run 1–2 GPU-days of fine-tuning on a representative dataset, Medusa heads deliver 2–2.8× speedup with no separate model in production.

The fallback hierarchy is: Prompt Lookup → EAGLE (if head exists) → Medusa (if you can fine-tune) → separate draft model (if one exists in the family).

---

When to Use Speculative Decoding — and When to Skip It

Speculative decoding is a latency optimization, not a throughput optimization. Mixing these up is how you end up adding infrastructure complexity with nothing to show for it.

Use Speculative Decoding When

You're optimizing for per-request latency. Chatbots, coding assistants, document editors, and any interactive application where the user is actively waiting for the model's response — these are exactly the use cases speculative decoding was designed for. Shaving 300ms off each response cycle is noticeable and valuable.

Batch sizes are low. For single-stream inference or up to ~8 concurrent requests, speculative decoding delivers its full benefit. The memory bandwidth freed by the draft-verify cycle translates directly to faster per-request completion.

The task produces predictable tokens. Code generation, structured output (JSON schemas, templates), document summarization, and retrieval-augmented generation all feature high token predictability — the conditions where acceptance rates stay high and speedups are maximized.

A high-quality draft model exists. Specifically, a same-family model at 1/10th to 1/20th the target size. This is the scenario where standard speculative decoding achieves 2–3× gains consistently.

Skip Speculative Decoding When

You're serving high-concurrency, high-throughput workloads. At batch sizes of 32 or more, continuous batching already keeps the GPU's compute units busy, so there is little idle capacity left for verification to use. Adding a draft model introduces additional forward passes that create overhead without benefit, and you may actually see lower aggregate throughput. Continuous batching alone is the right tool here.

VRAM is your binding constraint. Both the target and draft model must fit in GPU memory simultaneously (or be aggressively split across GPUs, which introduces communication overhead). If memory is already tight, the draft model may not be a viable addition.

Generation temperature is high and the task is open-ended creative work. High temperature means intentionally high token diversity. The draft model's predictions will mismatch the target model's high-entropy samples more often, collapsing acceptance rates. If the measured speedup falls to around 1× or below, the engineering overhead isn't justified.

The draft model is too large. If you're tempted to use a 34B draft for a 70B target because you want high acceptance rates, resist: the cost ratio will eat your gains. With a draft that large, the drafting cost per cycle approaches the cost of simply running the target, which can make speculative decoding net-negative.

A Note on Continuous Batching Compatibility

Speculative decoding and continuous batching are orthogonal features. Frameworks like vLLM and TGI support both simultaneously: the scheduler uses continuous batching to manage request queues, while speculative decoding accelerates individual request completion. You don't have to choose one over the other — the right configuration is usually continuous batching enabled at the framework level, with speculative decoding toggled on for low-concurrency endpoints and off for high-concurrency batch endpoints.

---

Frequently Asked Questions About Speculative Decoding

Does speculative decoding change the model's outputs?

No. The acceptance sampling algorithm is mathematically proven to produce a distribution identical to what the target model would generate alone. For greedy decoding (temperature = 0), outputs are bit-for-bit identical. For sampling with temperature > 0, the output distribution is equivalent — each token's marginal probability matches what the target model would produce through standard sampling.

Can I use speculative decoding with quantized models?

Yes. Both the target and draft models can be quantized independently — for example, the target model in INT8 and the draft model in INT4. Mixed-precision pairs work correctly as long as the tokenizer is shared. Quantized speculative decoding is common in production deployments where memory is the limiting factor, and the combination of quantization and speculative decoding is additive: you get the memory savings from quantization and the latency savings from speculative decoding simultaneously.

How many speculative tokens (K) should I use?

The range 4–8 is the sweet spot for most production tasks. More tokens per draft cycle means more potential gain but a higher penalty when a rejection occurs early in the chain — the entire remaining draft is discarded. HuggingFace's adaptive mode (num_assistant_tokens left at default) dynamically adjusts K based on observed acceptance rate, which is generally the best option unless you need deterministic latency behavior. vLLM's num_speculative_tokens parameter gives you explicit control.
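To see why the sweet spot sits in that range, the sketch below sweeps K and picks the value that maximizes the estimate (1 − α^(K+1)) / ((1 − α)(1 + K·r)) from Leviathan et al. (2023). It assumes a constant, independent acceptance rate α and a fixed draft-to-target cost ratio r; the function name `best_draft_length` and the sample inputs are illustrative.

```python
def best_draft_length(alpha, r, max_k=16):
    """Return the K in 1..max_k that maximizes the estimated speedup."""
    def speedup(k):
        # Expected tokens per cycle, including the correction/bonus token.
        tokens = (1 - alpha ** (k + 1)) / (1 - alpha) if alpha < 1 else k + 1
        # Cycle cost: K draft passes plus one target pass.
        return tokens / (1 + k * r)
    return max(range(1, max_k + 1), key=speedup)

for alpha in (0.6, 0.8, 0.9):
    print(f"alpha={alpha}, r=0.1: best K = {best_draft_length(alpha, 0.1)}")
```

Higher acceptance rates justify longer drafts, which is the behavior an adaptive schedule is trying to track at runtime.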

Does speculative decoding work with streaming responses?

Yes. Both vLLM and TGI support streaming with speculative decoding active. Tokens are streamed as they are accepted within each draft cycle, so they tend to arrive in short bursts of several tokens per verification cycle rather than strictly one at a time, and the full response completes sooner than with standard autoregressive streaming.

How is speculative decoding different from beam search?

Beam search generates multiple candidate sequences simultaneously and retains the highest-scoring one, explicitly changing the output distribution by selecting a maximum-likelihood path rather than sampling freely. Speculative decoding generates candidates only to verify them against the target model's distribution; the final output distribution is statistically identical to single-sequence sampling from the target model alone. Beam search changes what the model outputs in pursuit of higher-likelihood sequences; speculative decoding changes only how quickly the same outputs arrive.

---

Speculative Decoding Is Ready for Production

Speculative decoding is production-ready. The algorithm delivers 2–4× latency improvement with no quality penalty.

The decision hierarchy: if no draft model is available, start with Prompt Lookup Decoding for zero setup cost. If a same-family smaller model exists, use standard speculative decoding as your reliable baseline. When you need maximum performance and have either a pre-trained EAGLE head or a fine-tuning budget, upgrade to EAGLE-2 or Medusa for the top of the 3–4× range.

One caveat: below ~8 concurrent requests, enable speculative decoding. Above that, continuous batching is already doing the heavy lifting and speculative overhead becomes a liability.

If you're deploying at scale and want speculative decoding pre-configured with optimized draft model pairing, inference.net offers production-grade hosted inference with latency optimization built in — without the overhead of managing two models, tuning draft length, and tracking acceptance rates.

Related guides: LLM quantization, KV cache optimization, and continuous batching.

---

References

- Leviathan, Y., Kalman, M., & Matias, Y. (2023). Fast Inference from Transformers via Speculative Decoding. ICML 2023. https://arxiv.org/abs/2211.17192

- Chen, C., Borgeaud, S., Irving, G., Lespiau, J.-B., Sifre, L., & Jumper, J. (2023). Accelerating Large Language Model Decoding with Speculative Sampling. arXiv. https://arxiv.org/abs/2302.01318

- Li, Y., Wei, F., Zhang, C., & Zhang, H. (2024). EAGLE: Speculative Sampling Requires Rethinking Feature Uncertainty. ICML 2024. https://arxiv.org/abs/2401.15077

- Li, Y., Wei, F., Zhang, C., & Zhang, H. (2024). EAGLE-2: Faster Inference of Language Models with Dynamic Draft Trees. arXiv. https://arxiv.org/abs/2406.16858

- Cai, T., Li, Y., Geng, Z., Peng, H., & Dao, T. (2024). Medusa: Simple LLM Inference Acceleration Framework with Multiple Decoding Heads. ICML 2024. https://arxiv.org/abs/2401.10774

- Fu, Y., Bailis, P., Stoica, I., & Zhang, H. (2024). Break the Sequential Dependency of LLM Inference Using Lookahead Decoding. ICML 2024. https://arxiv.org/abs/2402.02057

- vLLM Project. (2024). Speculative Decoding. vLLM Documentation. https://docs.vllm.ai/en/latest/features/spec_decode.html

- HuggingFace. (2024). Speculative Decoding. HuggingFace Transformers Documentation. https://huggingface.co/docs/transformers/generation_strategies#speculative-decoding
